Note: scloud version 3 or greater is required for this step!

Run `scloud login` to ensure everything is working. If you receive no errors, you can run the following to generate a HEC token:

`scloud ingest post-collector-tokens -name testtoken -tenant default`

You will get a message back with your token details. Note: Save these! You can't retrieve tokens from the system!

Your token has been created, and you can now use it to send HTTP events to your endpoint.

Create a POST request in Postman and, under "Headers", set the Authorization header to your DSP HEC token prefixed with "Splunk". In fact, you can use the command line and curl if you prefer; I just use Postman as it easily forms my requests into scripts for me. Note: Ignore the Auth tab, we're not using that.

Next, click on the "Body" tab, select "raw" from the dropdown below and select "JSON". This is where we're going to create our event.

Hit "Send", and you should be rewarded with a "Success" message. Once you get the "Success" message, keep Postman open: when we build, preview and activate the DSP Pipe, we're going to need to hit "Send" again a few more times.

3) Visualize the HEC Event in a DSP Pipe - Using SPLv2

Navigate to "Build Pipeline", and select "Read from Splunk Firehose". Next to "Canvas" at the top, click "SPL".
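If you'd rather script the Postman request from step 2, here is a minimal Python sketch of the same POST. It only builds the request so you can inspect it before sending. The host name `dsp.example.com`, the token placeholder and the `/services/collector/event` path are assumptions for illustration, not values from this walkthrough; check the endpoint path against your own DSP environment. The port (31000) and the "Splunk"-prefixed Authorization header are the ones described in this post.

```python
import json
import urllib.request


def build_hec_request(host, token, event, port=31000,
                      path="/services/collector/event"):
    """Build (but do not send) a POST request for the DSP HEC.

    `path` is an assumed endpoint path; adjust it for your environment.
    """
    url = f"https://{host}:{port}{path}"
    # The JSON body carries the event, like the raw JSON body in Postman.
    body = json.dumps({"event": event}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            # The token is prefixed with "Splunk", as set under "Headers" in Postman.
            "Authorization": f"Splunk {token}",
            "Content-Type": "application/json",
        },
    )


# Hypothetical host and token, for illustration only.
req = build_hec_request("dsp.example.com", "YOUR_TOKEN", "Hello from DSP HEC")
print(req.get_method(), req.full_url)
print(req.get_header("Authorization"))

# To actually send it (the equivalent of hitting "Send"):
# with urllib.request.urlopen(req) as resp:
#     print(resp.read().decode("utf-8"))
```

Keeping the build step separate from the send step makes it easy to re-send the same event a few times while you preview the pipeline.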

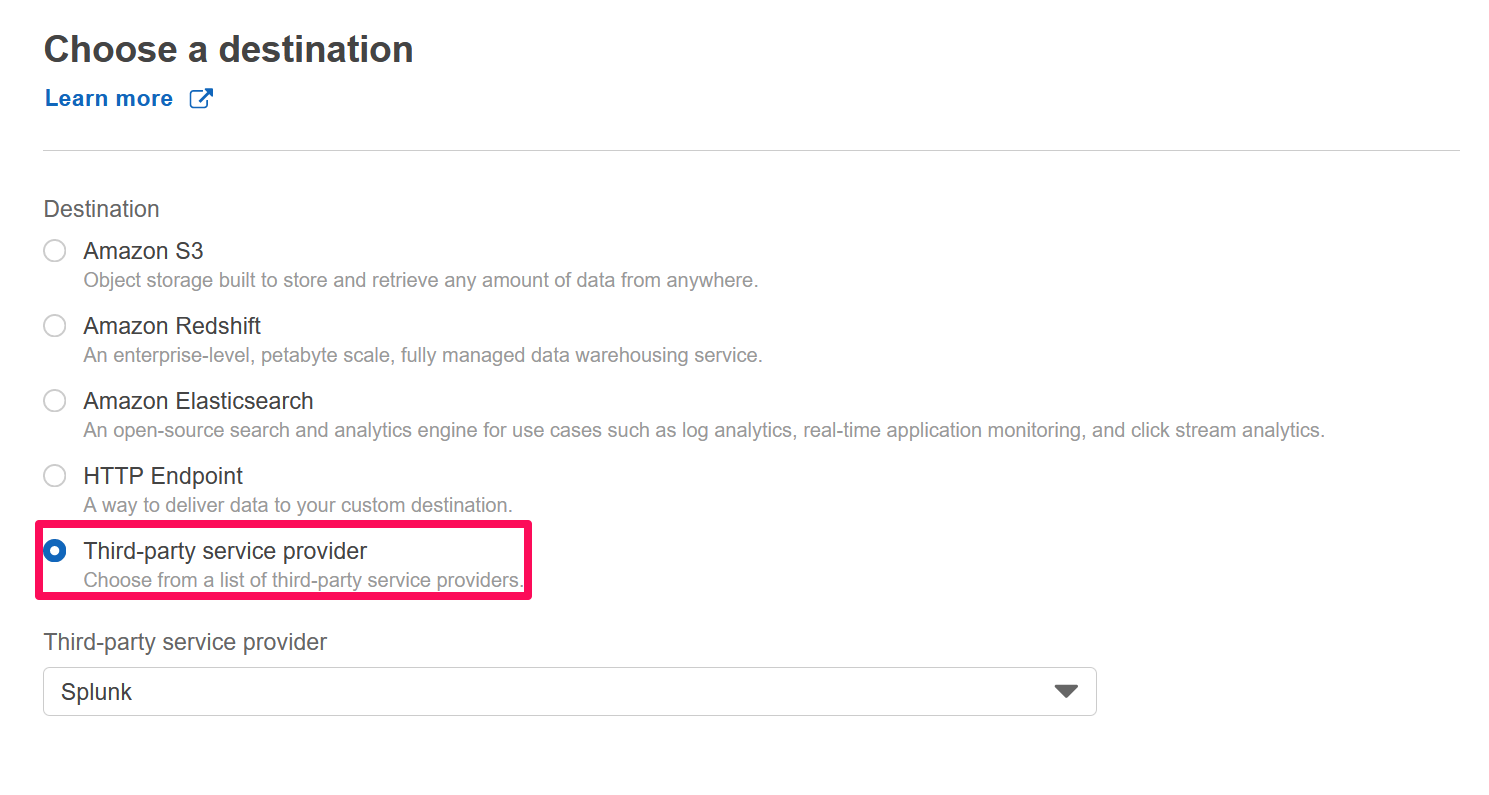

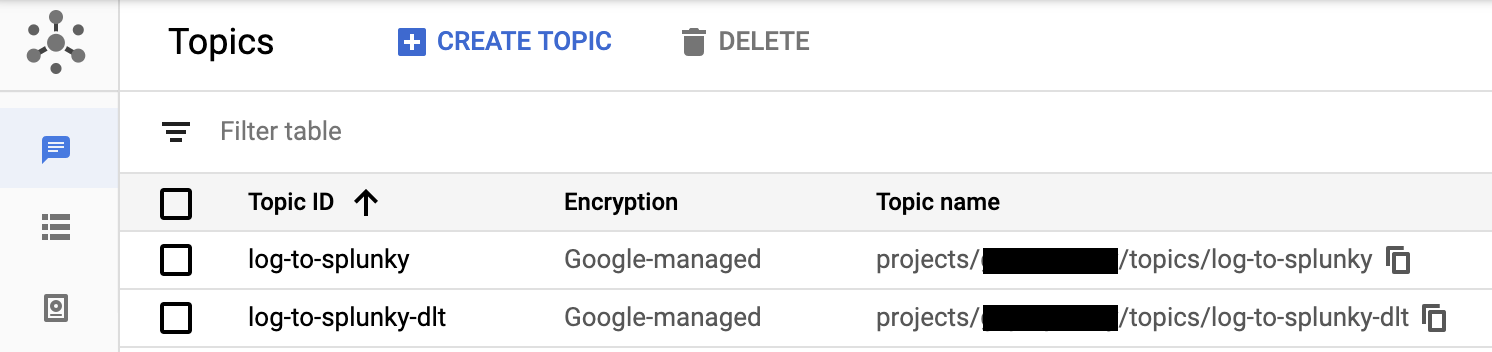

Data Stream Processor (DSP) version 1.1 brought in some cool new features, one of which was the ability to receive events over HTTP. You're probably familiar with Splunk's HTTP Event Collector; the DSP HEC works in a similar fashion, but has the added advantage of running across a Kubernetes cluster. So, if you've got a bunch of heavy forwarders whose only job is to collect these HTTP events and send them on to Splunk, this blog is probably for you!

Pre-requirements:

- A DSP environment that sends data to Splunk.
- Port requirements - The DSP HEC listens on port 31000.

Ok, so what is DSP, and where does this blog fit into the product?

Splunk DSP is a data stream processing service that processes data in real time and sends that data to your platform of choice. During the processing stage, Splunk DSP allows you to perform complex transformations and troubleshooting on your data before that data is indexed.

High-level view of current supported DSP sources and sinks - the ones we use in this blog are highlighted.

Form an HTTP POST event using Postman and send it to DSP.
